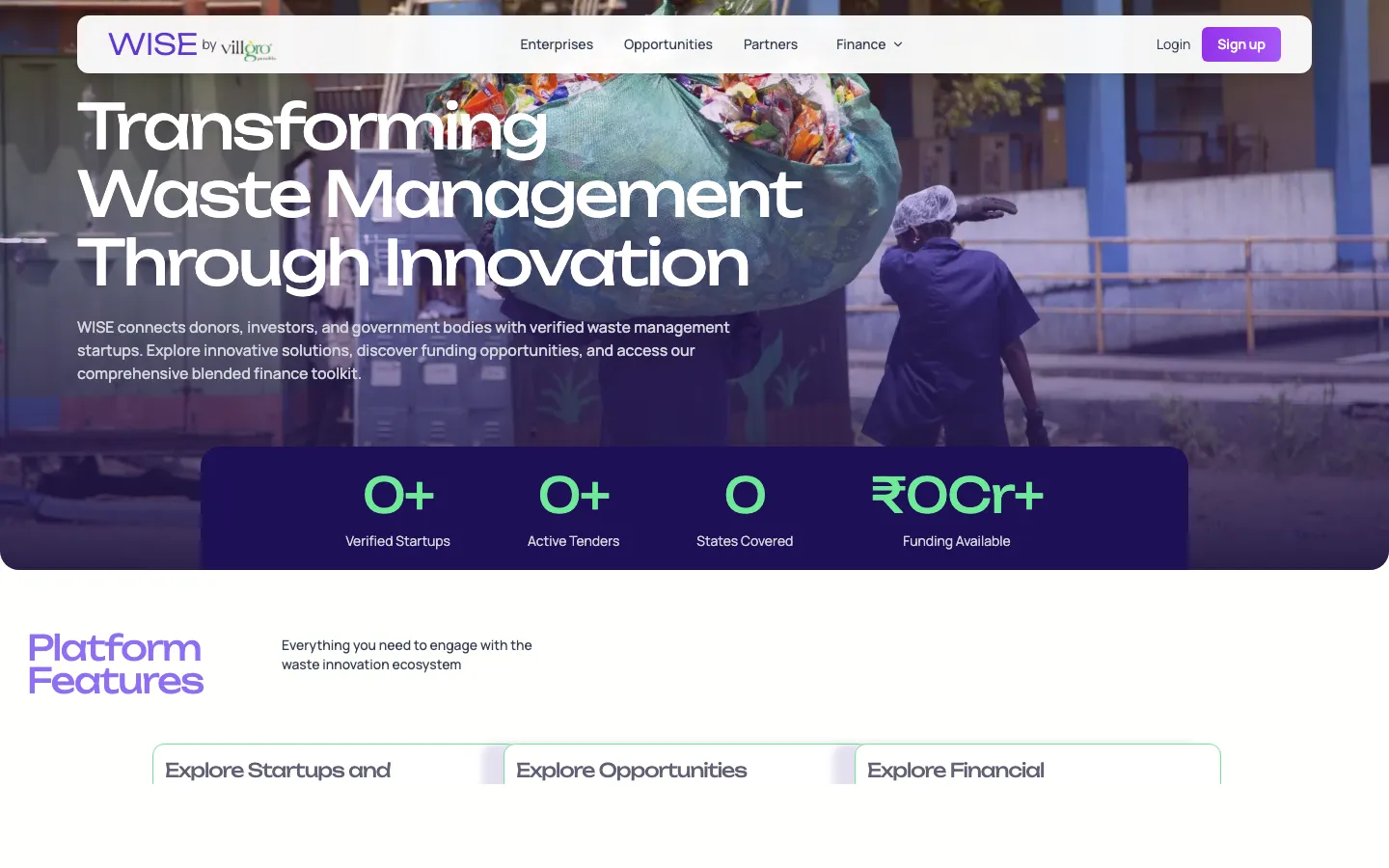

The brief

Villgro — India’s largest social-enterprise incubator — needed a brand-new platform for their WISE programme: one place where waste-management startups, government bodies, investors, and donors could actually find each other.

Three surfaces had to coexist on day one: an enterprise directory of vetted startups and ULB-partnered projects, an opportunities hub aggregating government tenders that would otherwise live buried on the Central Public Procurement Portal, and a finance layer surfacing live accelerator, grant, and fellowship openings.

The unlock

The product wasn’t possible without solving one very specific problem: the Central Public Procurement Portal (CPPP) — where every central-government tender in India is published — doesn’t offer a usable API. Tenders sit behind search forms, paginated result sets, session-bound detail URLs, and a CAPTCHA.

We built an automated scraper that runs daily at 02:30 IST via Celery. It uses Selenium + Chromium to drive the CPPP UI and Tesseract OCR with image preprocessing to solve the CAPTCHAs. The scraper handles 10+ date-format variants (tender publishers are not a consistent bunch) and upserts against a (source, external_id) unique constraint so re-runs are idempotent. It also generates stable detail-page URLs via a double-base64 scheme derived from the tender’s reference number. That bypasses CPPP’s session-bound links, so Villgro’s users can share, bookmark, and return to a tender long after the session expires.
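
To make three of those ideas concrete, here is a minimal Python sketch rather than the production code. The format list, the table layout, the function names, and the sample reference number are all illustrative assumptions, and how the double-base64 value is placed into a CPPP URL is deliberately left out.

```python
import base64
import sqlite3
from datetime import datetime

# Hypothetical subset of the date formats a scraper might encounter.
DATE_FORMATS = (
    "%d-%b-%Y %I:%M %p",  # 05-Mar-2025 06:00 PM
    "%d-%b-%Y",           # 05-Mar-2025
    "%d/%m/%Y %H:%M",     # 05/03/2025 18:00
    "%d/%m/%Y",           # 05/03/2025
    "%Y-%m-%d",           # 2025-03-05
)

def parse_tender_date(raw):
    """Try each known format in turn; return None if nothing matches."""
    text = " ".join(raw.split())  # collapse stray whitespace
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt)
        except ValueError:
            continue
    return None

def double_b64(reference):
    """Base64-encode the reference number twice, returning ASCII text."""
    once = base64.b64encode(reference.encode("utf-8"))
    return base64.b64encode(once).decode("ascii")

def undo_double_b64(token):
    """Reverse double_b64 to recover the original reference number."""
    return base64.b64decode(base64.b64decode(token)).decode("utf-8")

# Illustrative table: the unique constraint is what makes re-runs idempotent.
SCHEMA = (
    "CREATE TABLE tender (source TEXT, external_id TEXT, title TEXT, "
    "UNIQUE (source, external_id))"
)

def upsert_tender(conn, source, external_id, title):
    """Insert a tender, or update the existing row with the same key."""
    conn.execute(
        "INSERT INTO tender (source, external_id, title) VALUES (?, ?, ?) "
        "ON CONFLICT (source, external_id) DO UPDATE SET title = excluded.title",
        (source, external_id, title),
    )
```

Running `upsert_tender` twice with the same `(source, external_id)` pair leaves one row holding the latest title, which is the property that lets a daily scrape re-visit tenders it has already seen.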

A second Celery beat job at 03:30 IST auto-archives any approved tender past its bid-submission deadline, so the public directory never shows expired opportunities.
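
The core of that archive job can be sketched as a small pure function. This is a sketch under assumptions: the `Tender` class, its field names, and the status strings are hypothetical stand-ins for the real models, and the Celery wiring is omitted.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass
class Tender:
    external_id: str
    status: str            # assumed values: "pending", "approved", "rejected", "archived"
    bid_deadline: datetime

def archive_expired(tenders, now):
    """Archive approved tenders whose deadline has passed; return how many changed."""
    changed = 0
    for tender in tenders:
        if tender.status == "approved" and tender.bid_deadline < now:
            tender.status = "archived"
            changed += 1
    return changed
```

Only approved tenders are touched, so pending and rejected items stay in the moderation queue with their history intact.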

The moderation layer that makes the scraper trustworthy

Raw scraped data is noisy. Every scraped tender lands in a pending state and flows through a moderation queue before it’s ever visible to end users. Moderators (Villgro staff) approve, reject, edit, or archive each item — and every action is recorded in a ModerationAction log with the acting user, the comment, and a diff of any edits. Only approved tenders appear in the public API.
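
A minimal sketch of how an edit could be logged with its diff. Only the name ModerationAction comes from the description above; the field names, the `apply_edit` helper, and the dict-based tender are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

def field_diff(before, after):
    """Return {field: (old, new)} for every field whose value changed."""
    keys = before.keys() | after.keys()
    return {
        key: (before.get(key), after.get(key))
        for key in sorted(keys)
        if before.get(key) != after.get(key)
    }

@dataclass
class ModerationAction:
    actor: str
    action: str     # e.g. "approve", "reject", "edit", "archive"
    comment: str
    diff: dict
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

def apply_edit(tender, changes, actor, comment, log):
    """Apply a moderator's changes to a tender dict and append a log entry."""
    before = dict(tender)          # snapshot before mutating
    tender.update(changes)
    entry = ModerationAction(actor, "edit", comment, field_diff(before, tender))
    log.append(entry)
    return entry
```

Storing only the changed fields keeps each log entry small while still showing exactly what a moderator altered.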

The same pattern applies to enterprises and funding opportunities — is_published flags and verification status are first-class fields, not afterthoughts.

The three user-facing surfaces

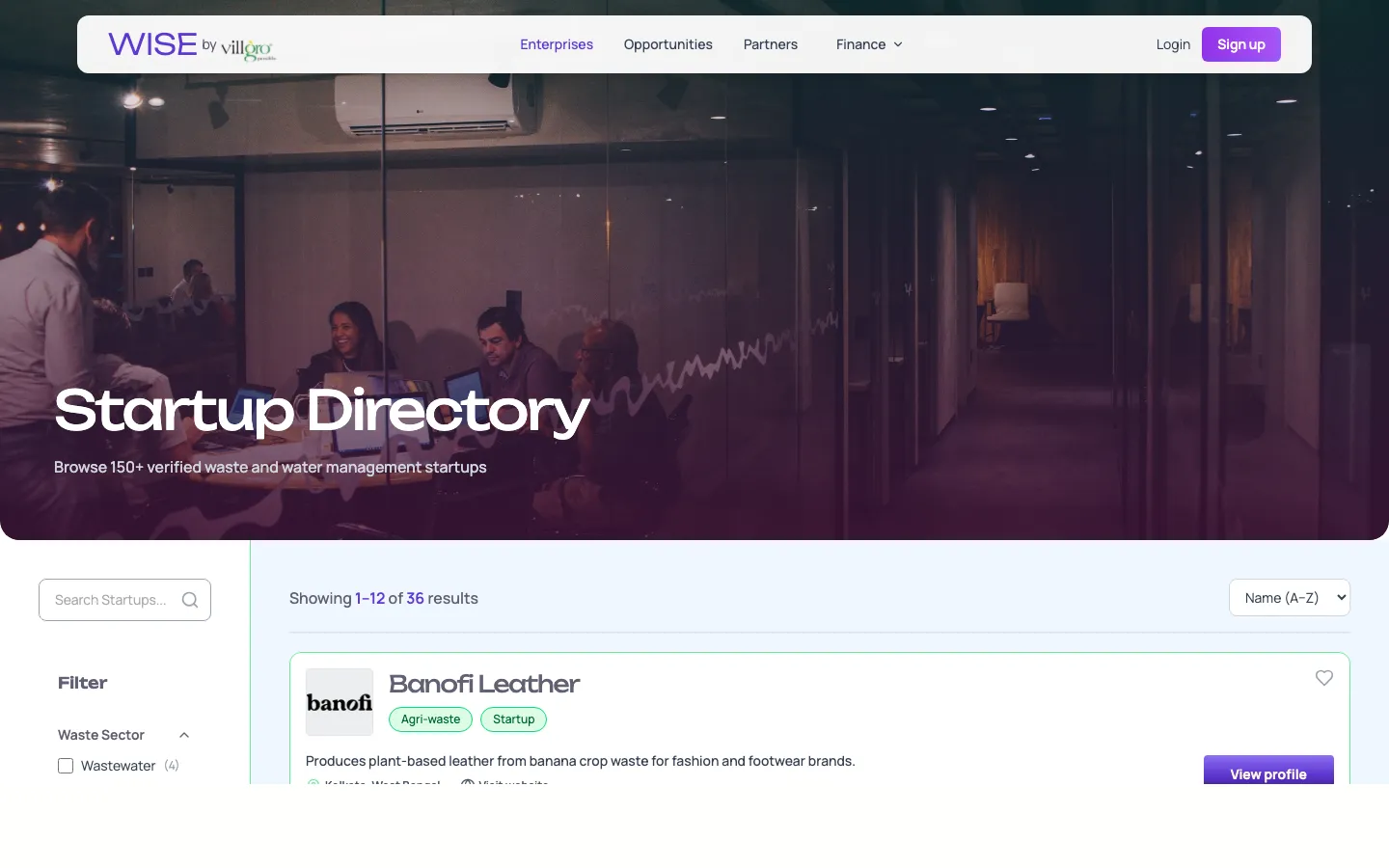

Enterprise directory

Filterable by waste sector, enterprise type (startup or ULB project), state, and ULB-partnership status. Each entry has a slug-based detail page with 18+ structured fields — team, traction, impact metrics, contact info — backed by a publish/verify workflow so Villgro’s team controls what’s live.

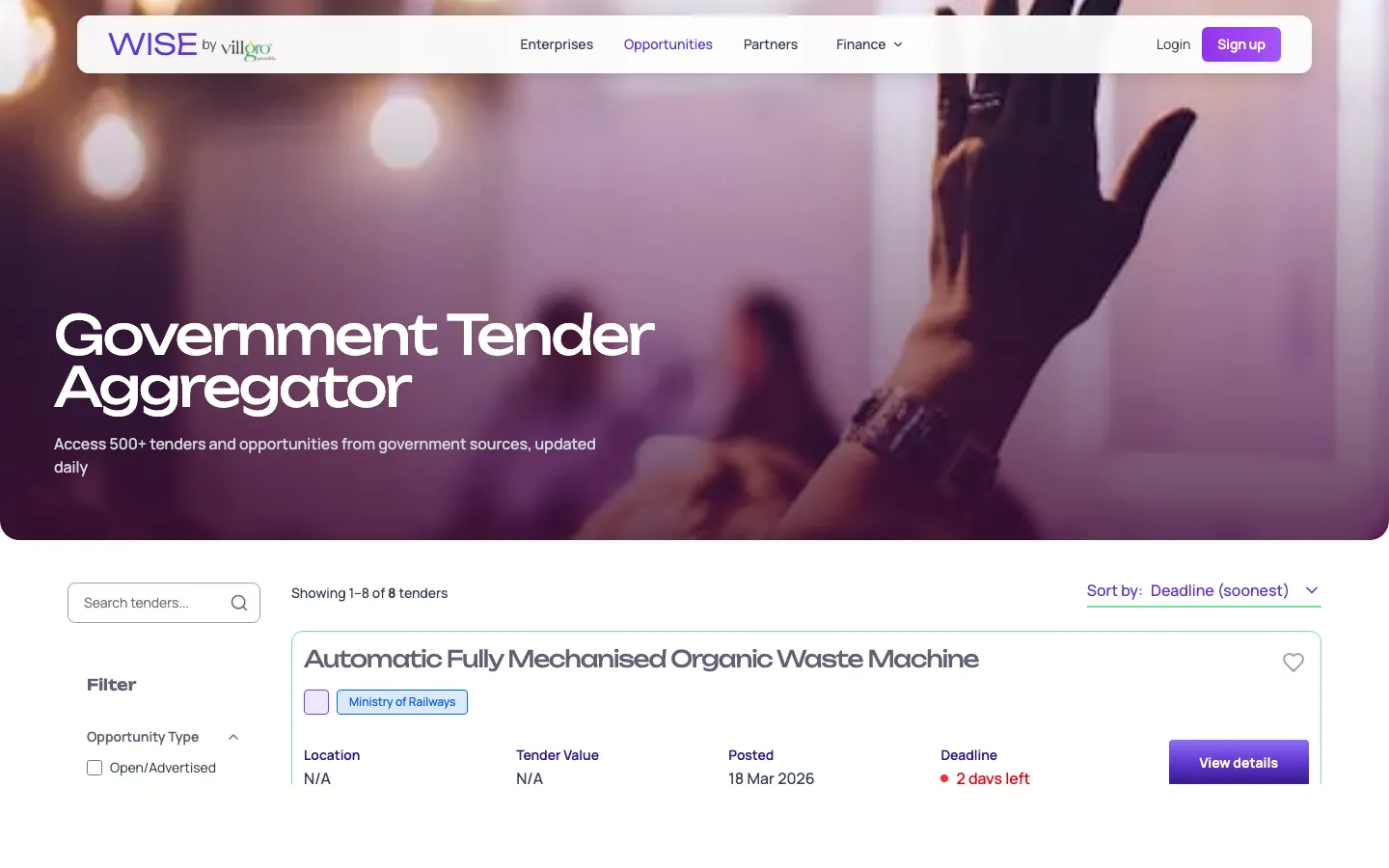

Opportunities hub

Faceted search across tenders with filters for category, location, type, waste sector, and deadline buckets (next 7 / 30 / 60 days). Sorting by deadline or tender value. Individual tender pages cross-link to related enterprises — so a ULB tender for organic-waste processing surfaces the startups in the directory that could bid on it.
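
The deadline buckets reduce to a simple window check. The sketch below assumes that "next N days" includes today and day N and excludes expired tenders; the function names and the dict shape are hypothetical.

```python
from datetime import date

def in_window(deadline, today, days):
    """True if the deadline falls within the next `days` days (inclusive)."""
    days_left = (deadline - today).days
    return 0 <= days_left <= days

def filter_by_window(tenders, today, days):
    """Keep tenders (dicts with a 'deadline' date) that close within the window."""
    return [t for t in tenders if in_window(t["deadline"], today, days)]
```

Under this reading the buckets nest: anything in the 7-day bucket also shows up under 30 and 60 days.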

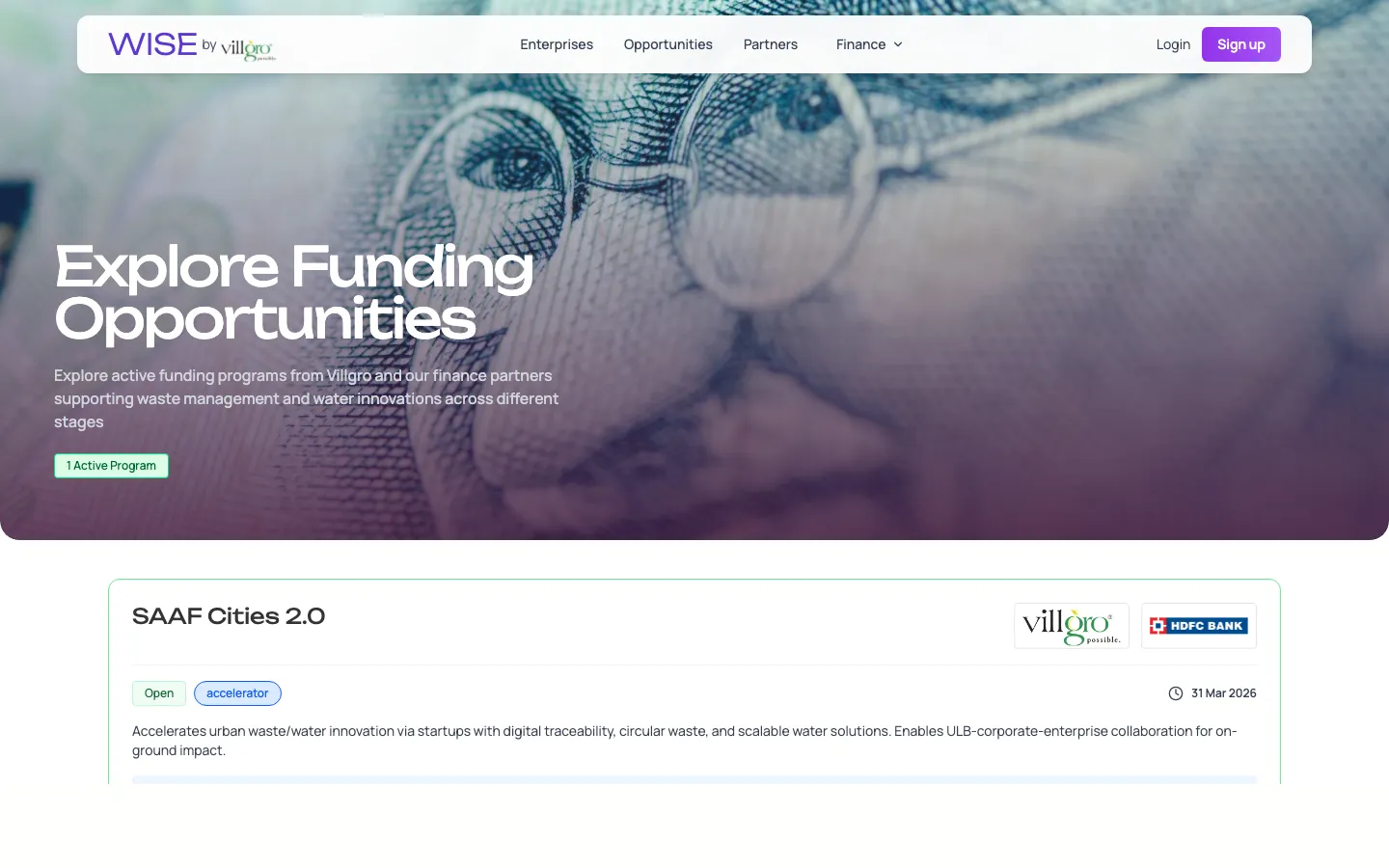

Finance

Curated accelerators, grants, and fellowships with deadlines, eligibility rules, partner logos, and social-proof posts. Managed through a Sanity-style editorial workflow that the Villgro team owns directly.

And the connective tissue

Authenticated users can save enterprises and opportunities, request meetings with enterprises (tracked by a status machine with pending, confirmed, and declined states), and manage their own profile with a user-type taxonomy (startup / investor / donor / ULB / researcher) that drives which sections of the site feel relevant to them.
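
A meeting-request status machine can be as small as a transition table. The sketch assumes that a pending request may become confirmed or declined and that both of those are final; the real rules may allow more transitions.

```python
# Assumed transition table: which target states each state may move to.
ALLOWED = {
    "pending": {"confirmed", "declined"},
    "confirmed": set(),
    "declined": set(),
}

class InvalidTransition(ValueError):
    """Raised when a status change is not permitted."""

def transition(current, target):
    """Return the new status, or raise if the move is not allowed."""
    if target not in ALLOWED.get(current, set()):
        raise InvalidTransition(f"{current} -> {target} is not allowed")
    return target
```

Keeping the rules in one table means the API and the admin screens can share a single source of truth for what a status change is allowed to do.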

Engineering decisions worth calling out

Auth via httpOnly cookies, not localStorage tokens. Short-lived access cookies (60 min) and rotating refresh cookies (7 days) with blacklist-on-rotation. The frontend never sees a token; the Axios client queues concurrent requests during a silent refresh and only surfaces a login redirect if the refresh itself fails. Social login via Google and GitHub flows through the same cookie mechanism.
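
The server side of that rotation can be sketched with the standard library alone. This is an in-memory illustration, not the platform's auth code: `RefreshStore`, the cookie name `refresh`, and the SameSite value are assumptions, and a real deployment would persist tokens rather than hold them in a dict.

```python
import secrets
import time
from http.cookies import SimpleCookie

REFRESH_TTL = 7 * 24 * 60 * 60  # 7 days, in seconds

class RefreshStore:
    """Issue refresh tokens and blacklist each one as soon as it is rotated."""

    def __init__(self):
        self._active = {}        # token -> (user_id, expires_at)
        self._blacklist = set()  # tokens that have already been rotated

    def issue(self, user_id, now=None):
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)
        self._active[token] = (user_id, now + REFRESH_TTL)
        return token

    def rotate(self, token, now=None):
        """Swap a valid token for a new one; reject reused or expired tokens."""
        now = time.time() if now is None else now
        if token in self._blacklist:
            raise PermissionError("refresh token already used")
        record = self._active.pop(token, None)
        if record is None:
            raise PermissionError("unknown refresh token")
        user_id, expires_at = record
        self._blacklist.add(token)
        if now >= expires_at:
            raise PermissionError("refresh token expired")
        return self.issue(user_id, now)

def refresh_cookie_header(token):
    """Build a Set-Cookie value that JavaScript in the page cannot read."""
    cookie = SimpleCookie()
    cookie["refresh"] = token            # hypothetical cookie name
    cookie["refresh"]["httponly"] = True
    cookie["refresh"]["secure"] = True
    cookie["refresh"]["samesite"] = "Lax"  # assumed policy
    cookie["refresh"]["max-age"] = REFRESH_TTL
    cookie["refresh"]["path"] = "/"
    return cookie["refresh"].OutputString()
```

Because every rotation blacklists the old token, a stolen refresh cookie that has already been used once is rejected on replay.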

Celery safety by default. 600-second hard timeouts, acks_late so work isn’t lost on worker crashes, prefetch_multiplier=1 so a single stuck scrape doesn’t block the queue. Task-failure signals fan out an admin email via Resend — so if CPPP changes its markup, Villgro hears about it before users do.
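
Expressed as a Celery configuration module, those defaults might look like the fragment below. The setting names are Celery's documented lowercase keys; the `timezone` value is an assumption inferred from the IST schedule, and the failure-email hook is not shown.

```python
# celeryconfig.py-style settings, loaded by the Celery app at startup.

timezone = "Asia/Kolkata"       # assumed, so beat schedules are read as IST

task_time_limit = 600           # hard limit: a task running past 600 s is terminated
task_acks_late = True           # acknowledge after the task finishes, not on receipt
worker_prefetch_multiplier = 1  # each worker process reserves one task at a time
```

With `task_acks_late` enabled, a task can be delivered again after a worker crash, which is why an idempotent scraper matters: a repeated run upserts the same rows instead of duplicating them.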

Production containerization that takes compliance seriously. All containers run as non-root (appuser, UID 1001) via gosu. Docker images are pinned to specific versions, not floating tags. Nginx fronts the stack with HTTP/2, HSTS, and security headers. TLS via Let’s Encrypt + certbot, renewing automatically. The entire stack lives on a single EC2 instance with resource limits and log rotation configured at the compose level.

112 tests, split across API and scraper. 66 API tests cover the endpoints end-to-end; 46 scraper tests validate the CPPP parsing logic against frozen HTML fixtures — so when CPPP’s layout shifts, the test suite catches it before production does.
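
A fixture-based parser test follows a simple shape, sketched here with the standard-library HTML parser. The markup in `FROZEN_ROW` is invented for illustration and is not CPPP's real page structure; `RowParser` and `parse_rows` are hypothetical names.

```python
from html.parser import HTMLParser

# Made-up fixture standing in for a saved results page.
FROZEN_ROW = """
<table id="results">
  <tr><td>1</td><td>05-Mar-2025</td><td>Organic waste processing plant</td><td>REF/2025/001</td></tr>
</table>
"""

class RowParser(HTMLParser):
    """Collect the text of each <td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._in_cell = True
            self._row.append("")

    def handle_endtag(self, tag):
        if tag == "td" and self._in_cell:
            self._row[-1] = self._row[-1].strip()
            self._in_cell = False
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None

    def handle_data(self, data):
        if self._in_cell:
            self._row[-1] += data

def parse_rows(html):
    parser = RowParser()
    parser.feed(html)
    return parser.rows

def test_parses_frozen_fixture():
    expected = ["1", "05-Mar-2025", "Organic waste processing plant", "REF/2025/001"]
    assert parse_rows(FROZEN_ROW) == [expected]
```

When the live layout changes, the old fixture still passes while a freshly captured one fails, which narrows the break to the parser rather than the network or the CAPTCHA step.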

How we approached it

- Schema first, UI second. The backend models — enterprise, opportunity, funding opportunity, moderation action — were designed against the workflow Villgro’s team actually runs, before any React was written. That meant fewer pivots once components started landing.

- Aggregator before directory. The tender scraper was the first production-ready module. Without it, the platform is a listings site; with it, the platform is a utility startups actually return to.

- Moderator-first data model. Nothing scraped appears publicly without human approval. The audit log, the per-action comments, the published/verified flags — they all exist so Villgro can point to a governance story when partnering with government bodies.

The team

From MakaraTech: a designer, a delivery manager, an architect, a senior engineer, and two junior engineers.